Kinect4Azure for VRTogether Volumetric Capture

Ever since Microsoft announced plans to release a new Kinect RGBD sensor, the technology community has been waiting patiently. It was unfortunate that production of the previous Kinect v2 was halted, as it was considered one of the best low-cost RGBD sensors in terms of depth estimation quality. The continuation of the Microsoft Kinect series is therefore welcome news for the computer vision community.

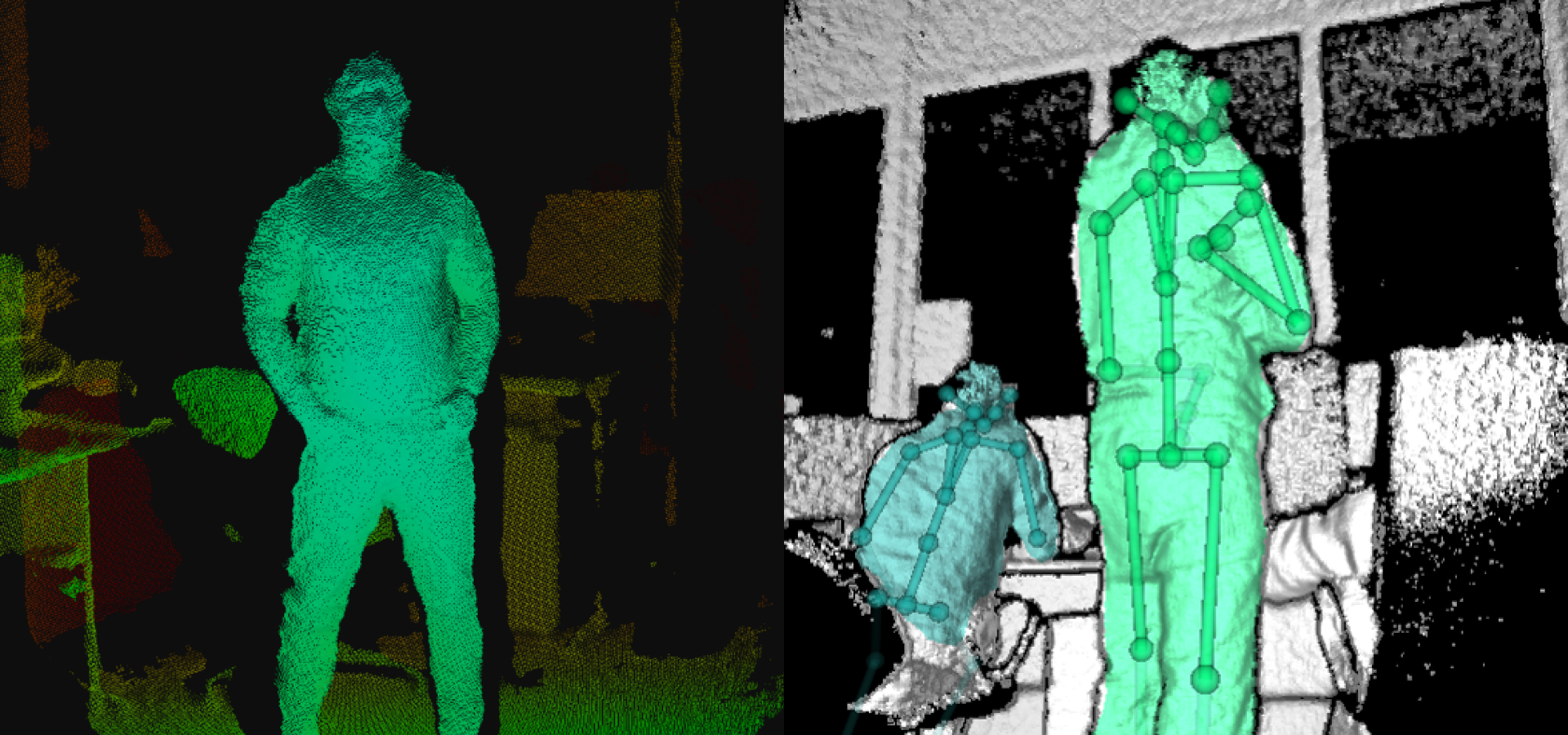

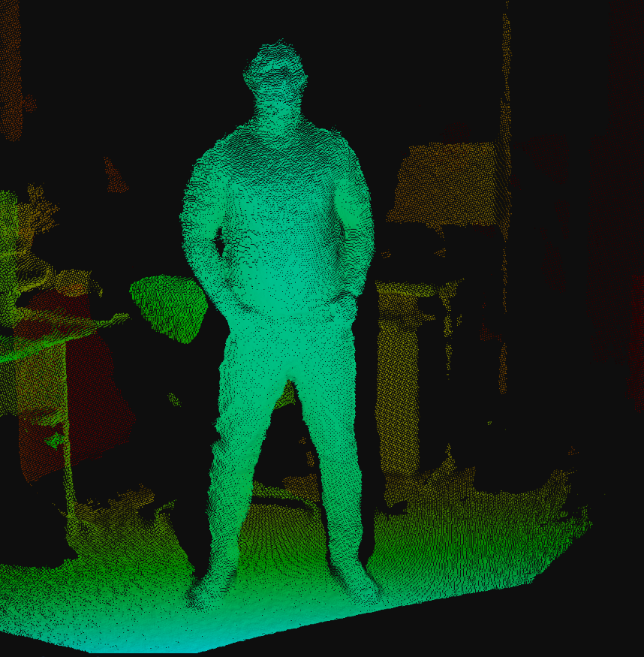

The new Kinect4Azure (K4A) kit includes a 12 MPixel RGB camera, supplemented by a 1 MPixel depth camera. It also provides supplementary utilities such as real-time skeleton tracking, a 360-degree seven-microphone array and an inertial measurement unit (IMU). These utilities, together with the top-notch depth quality of the K4A, are expected to boost a wide spectrum of computer vision research fields.

The EU project VRTogether provides an immersive experience for multiple remote users. The objective of the project is to provide a VR experience in which users can see themselves (user representation), see other users, and interact with them inside the VR space. Achieving this requires reconstructing the users in real time.

Since the announcement of the K4A, VRTogether and, in particular, CERTH have been awaiting the release of the sensor. The quality of the user representations is critical for immersing each user in the virtual experience. Higher quality makes the experience more realistic, because fine details in the representations, such as facial expressions, convey the emotional state of each user.

Pilot 3 will be released during the third year of the project. Compared with the volumetric videos of users produced by the software of previous pilots, we expect higher-quality user representations thanks to the time-of-flight (ToF) technology of the K4A, bringing the immersive experience to its peak by the end of the project.

Beyond the EU VRTogether project, the use of the K4A for real-time 3D human reconstruction, volumetric capture and video, and other related applications is already regarded as a certainty.

Authors: Anargyros Chatzitofis, Vasilios Magoulianitis – CERTH

Come and follow us on this VR journey with i2CAT, CWI, TNO, CERTH, Artanim, Viaccess-Orca, Entropy Studio and Motion Spell.

This project has been funded by the European Commission as part of the H2020 program, under the grant agreement 762111.