Mentoring Students in Social VR (Part II)

For more details, see the previous post, “Mentoring Students in Social VR”.

The VRTogether project conducts ground-breaking research on Social VR, which invites multiple users to share a virtual space and interact with each other’s virtual representations. Apart from its main research activities, the project mentors master’s students in this area, forming a new generation of graduates who can, in the future, reshape the media landscape. CWI has been actively offering master’s thesis projects on the core topics of the project since 2018.

In 2018, two students graduated at TU Delft with the theses “Multi-Camera Registration for VR: A Flexible, Feature-based Approach” (Qian Qinzhuan) and “User Experience in Social Virtual Reality” (Yiping Kong). The latter resulted in a top-quality paper, “Measuring and understanding photo sharing experiences in social Virtual Reality”, at ACM CHI 2019. In 2019, another two students graduated: Guo Chen (TU Delft) with the thesis “Designing and Evaluating a Social VR Clinic for Knee Replacement Surgery”, and Jelmer Mulder (VU Amsterdam) with the thesis “Temporal Interpolation of Dynamic Point Clouds using Convolutional Neural Networks”. Guo’s work was published as a late-breaking work at ACM CHI 2020, and an improved demo of it was published at ACM IMX 2020, where it received the best demo award. Jelmer’s work was published as an invited paper at the 2019 IEEE Conference on Artificial Intelligence and Virtual Reality (AIVR).

In 2020, two more students graduated: Yanni Mei at TU Delft with the thesis “Cake VR: Design a Social VR Tool for Remote Co-Design of Customized Cakes” and Ignacio Reimat at Universitat Politecnica de Catalunya with the thesis “Temporal Interpolation of Human Point Clouds Using Neural Networks and Body Part Segmentation”. Both students graduated with a score of 9 (out of 10). We are currently working on publishing the students’ work at prestigious conferences.

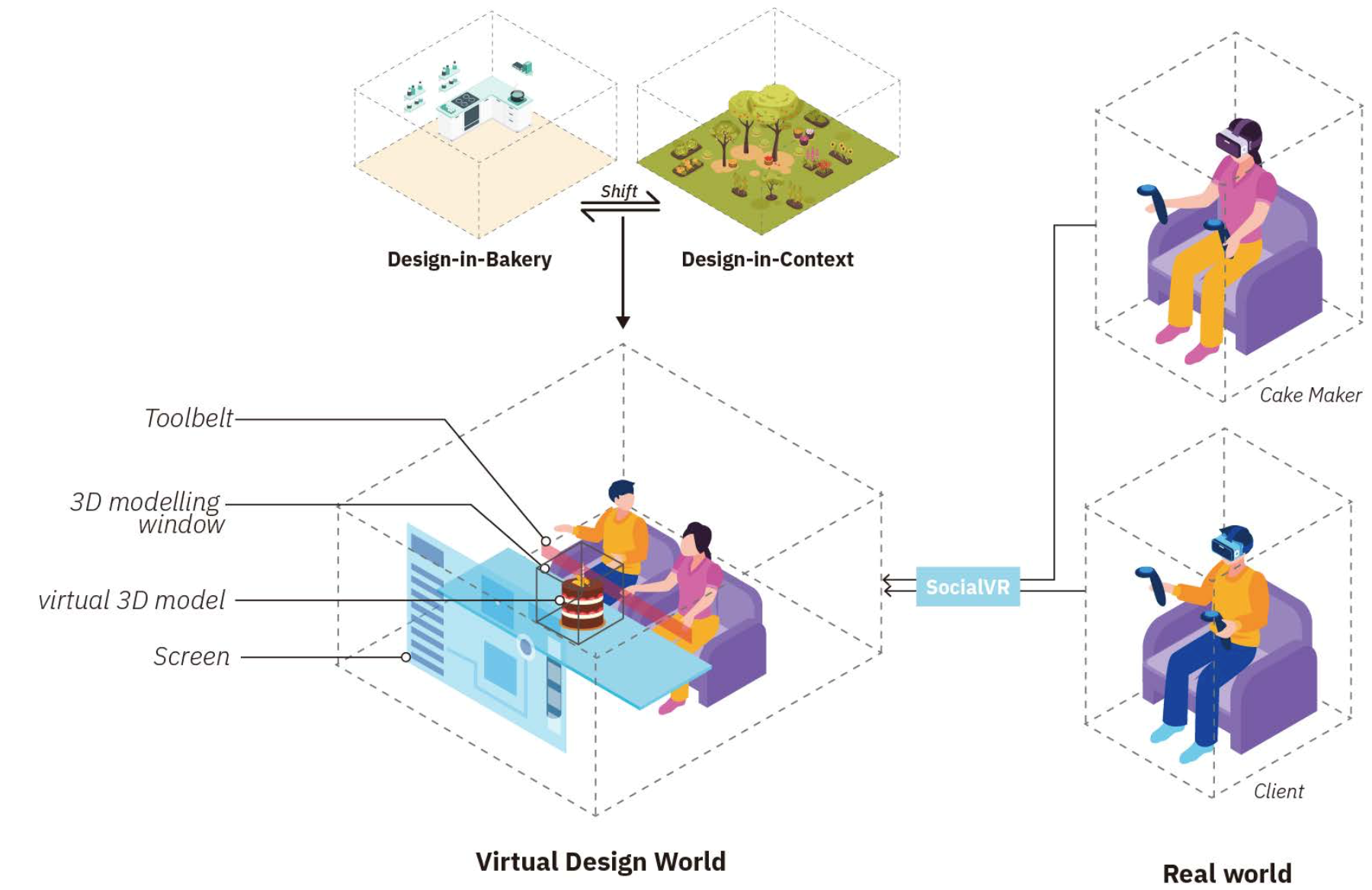

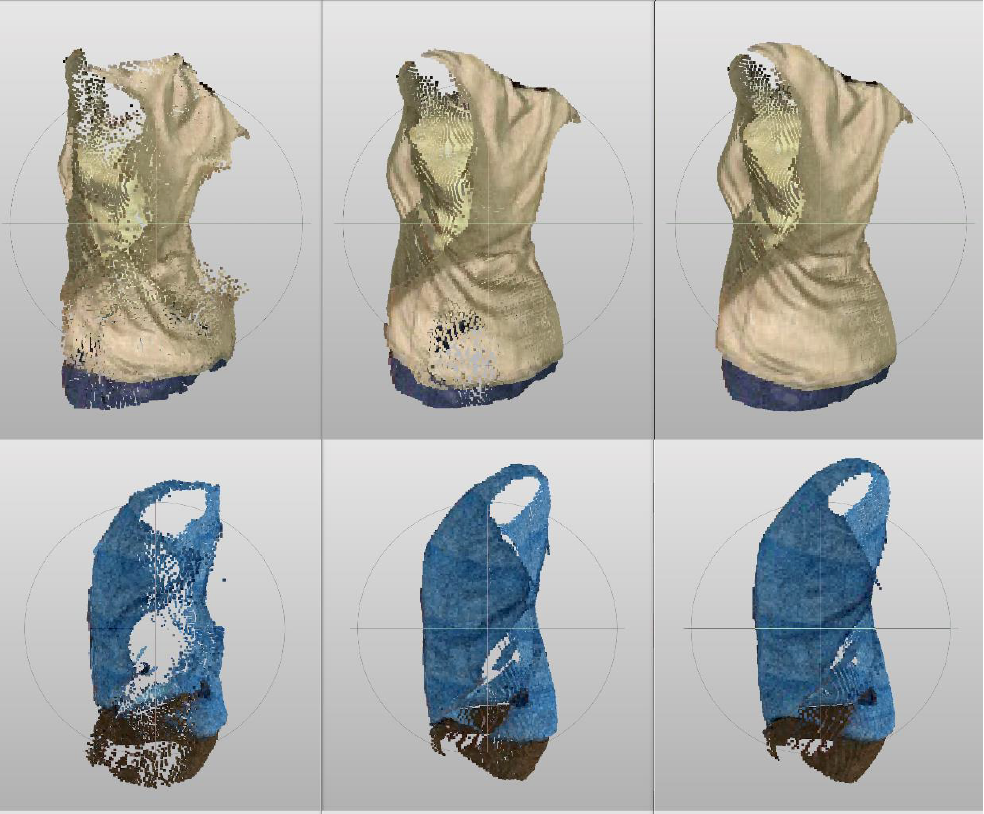

Yanni’s thesis explored novel use cases for Social VR in the pastry design domain, developing and evaluating a prototype of a social VR tool that lets clients co-design cakes with pastry chefs. Exploring such use cases can help to better understand the exploitation opportunities for social VR. Ignacio’s thesis investigated how body part segmentation can aid in performing temporal interpolation for dynamic point clouds. Body part segmentation can be used to improve the accuracy of the neural network in dealing with parts moving at different speeds. Moreover, the same principle can be applied to improve compression efficiency for point cloud transmission, by adopting a different level of detail based on salient parts.

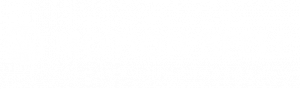

Yanni Mei successfully defended her master’s thesis on August 20th, 2020. The motivation behind this project was to support clients with limited design skills and pastry knowledge in communicating and co-designing their dream cakes with pastry chefs for special celebrations. The cake VR tool provides 3D visualization and facilitates intuitive gestural manipulation of the size and decoration of the virtual cakes, and it can be used for both onsite and remote communication (Figure 1 and Figure 2). The project started with a series of ethnographic studies with five pastry chefs and four clients who had experience in purchasing customized cakes. Then, based on the requirements gathered from the ethnographic studies, we designed and implemented a social VR prototype, which allows two users wearing head-mounted displays (HMDs) to collaboratively design cakes in a shared virtual space. We also performed a user validation test with six clients and three pastry chefs to see to what extent the cake VR prototype meets the requirements. The prototype successfully meets 7 out of the 10 requirements, and clients are able to design cakes and communicate the size, decoration and celebration theme of the cakes to pastry chefs.

Ignacio Reimat successfully defended his thesis on June 30th, 2020. The motivation behind the project was to reduce the bandwidth requirements for the transmission of dynamic point clouds. Reducing the temporal resolution of the sequence to be transmitted results in notable bitrate gains; however, it comes at the expense of perceived quality. Performing temporal interpolation at the receiver side makes it possible to cope with bandwidth requirements while maintaining the appearance of smooth motion. In his thesis, Ignacio provided two main contributions: a) the creation of point cloud contents with annotated body parts, from a publicly available 3D mesh dataset, and b) an architecture capable of performing temporal interpolation of point cloud contents representing body parts. Results show that applying body part segmentation and predicting the interpolation of individual body parts can improve the accuracy of point cloud temporal interpolation systems.
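To make the idea of receiver-side, per-part interpolation more concrete, here is a minimal Python sketch. It is not the architecture from the thesis: a plain linear blend stands in for the neural network, and the sketch assumes that points in consecutive frames correspond row by row, which real captured point clouds generally do not. The names `drop_frames`, `linear_blend`, `interpolate_by_part` and the `predictors` argument are hypothetical and chosen for illustration only.

```python
import numpy as np


def drop_frames(frames, keep_every=2):
    """Sender side: keep every `keep_every`-th frame to lower the temporal resolution."""
    return frames[::keep_every]


def linear_blend(prev_part, next_part, t):
    """Placeholder predictor: straight-line blend between two frames (not a neural network)."""
    return (1.0 - t) * prev_part + t * next_part


def interpolate_by_part(prev_pts, next_pts, labels, t=0.5, predictors=None):
    """Receiver side: estimate an in-between frame, one body part at a time.

    Assumes prev_pts and next_pts are (N, 3) arrays with row-wise point
    correspondence, and labels is an (N,) array of integer body-part ids.
    `predictors` optionally maps a part id to its own predictor function.
    """
    predictors = predictors or {}
    out = np.empty_like(prev_pts, dtype=float)
    for part in np.unique(labels):
        mask = labels == part
        # Each part can use its own predictor, so fast and slow parts are handled separately.
        predict = predictors.get(int(part), linear_blend)
        out[mask] = predict(prev_pts[mask], next_pts[mask], t)
    return out


# Toy example: two points, each belonging to a different body part.
prev_pts = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
next_pts = np.array([[0.0, 2.0, 0.0], [1.0, 0.0, 4.0]])
labels = np.array([0, 1])
mid = interpolate_by_part(prev_pts, next_pts, labels)
```

The per-part loop is the point of the sketch: it marks where a learned model specialised for, say, hands or torso could replace the linear blend, while the frame dropping on the sender side is what produces the bitrate saving.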

Yanni Mei, Design a SocialVR Tool for the Remote Co-Design of Customized Cakes (2020, TU Delft): https://repository.tudelft.nl/islandora/object/uuid%3A78a1147b-e97b-418f-a5e6-3ce944df4f49

Nacho Reimat, Temporal Interpolation of Human Point Clouds Using Neural Networks and Body Part Segmentation (2020, Universitat Politecnica de Catalunya): https://www.dis.cwi.nl/downloads/Masters-2020-Nacho.pdf

Author: CWI

Come and follow us on this VR journey with i2CAT, CWI, TNO, CERTH, Artanim, Viaccess-Orca, TheMo and Motion Spell.

This project has been funded by the European Commission as part of the H2020 program, under the grant agreement 762111.