Understanding and Measuring Social VR Experiences

By Jie Li, Francesca de Simone, and Pablo Cesar, CWI

VRTogether partner CWI has created a new protocol and set of metrics for evaluating social VR experiences with end-users. The protocol and metrics cover both quantitative and qualitative aspects: a new questionnaire combining presence, immersion, and togetherness, and a set of objective metrics that analyse user behaviour, focusing on speech, head rotation, body movement, etc. This novel evaluation method has been iteratively developed and validated through a human-centred process, including two experiments (with around 100 users in total).

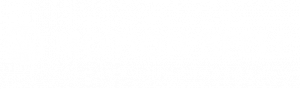

The first study was based on photo sharing in Social VR. We ran context mapping (N=10), an expert creative session (N=6), and an online experience clustering questionnaire (N=20), which resulted in a generalizable Social VR questionnaire that measures three dimensions of the experience: Quality of Interaction (QoI), Social Meaning (SM) and Presence/Immersion (PI). We then ran a controlled, within-subjects study (N=26 pairs) to compare photo sharing face-to-face (F2F), over Skype, and in Facebook Spaces (see Figure 1). Using semi-structured interviews, audio analysis, and our Social VR questionnaire, we found that Social VR can closely approximate F2F sharing. This experiment contributes empirical findings on how the experience differs across digital communication media. It also evaluates the new Social VR questionnaire, showing that the questionnaire items measuring the three dimensions (QoI, SM, PI) are properly constructed.

The second study aimed to develop and test both subjective and objective methodologies to evaluate and compare Social VR systems. This study (N=16 pairs) also follows a within-subjects design. This time, the use case was watching movie trailers together. The experiment included three conditions: Facebook Spaces (with avatar representation of the users), the VRTogether Web-based system (with 2D real-time video representation of the users), and a face-to-face condition (see Figure 2). The subjective assessment was the same as in the first study, using the Social VR questionnaire and semi-structured interviews. This time we also collected data on verbal interactions, visual patterns, and body movements, based on the log of the participants’ head rotation, the capture of the HMD viewport and audio channel, and a webcam recording of each user’s body. In other words, we collected objective data on:

- how much time participants spent looking at and speaking to each other;

- how much participants moved their body and head.
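As a rough illustration of how such measures could be derived from a head-rotation log, here is a minimal sketch. It is not the analysis code used in the study: the log format (a list of head yaw angles in degrees, sampled at a fixed rate), the fixed `partner_yaw` direction, and the `half_fov` threshold are all assumptions made for this example.

```python
def total_head_rotation(yaw_degrees):
    """Sum of absolute yaw changes between consecutive samples, in degrees."""
    total = 0.0
    for prev, curr in zip(yaw_degrees, yaw_degrees[1:]):
        # Wrap the difference into [-180, 180) so that 350 -> 10 counts as 20 degrees.
        delta = (curr - prev + 180.0) % 360.0 - 180.0
        total += abs(delta)
    return total


def fraction_looking_at_partner(yaw_degrees, partner_yaw, half_fov=30.0):
    """Share of samples in which the head points within half_fov of the partner.

    partner_yaw and half_fov are hypothetical parameters for this sketch.
    """
    if not yaw_degrees:
        return 0.0
    hits = 0
    for yaw in yaw_degrees:
        # Signed angular offset between head direction and partner direction.
        offset = (yaw - partner_yaw + 180.0) % 360.0 - 180.0
        if abs(offset) <= half_fov:
            hits += 1
    return hits / len(yaw_degrees)


if __name__ == "__main__":
    log = [350.0, 10.0, 0.0]  # made-up yaw samples, in degrees
    print(total_head_rotation(log))
    print(fraction_looking_at_partner([0.0, 80.0, 100.0, 200.0], partner_yaw=90.0))
```

With a fixed sampling rate, the fraction returned by `fraction_looking_at_partner` can be multiplied by the session duration to estimate looking time; speaking time could be estimated in the same way from per-sample voice-activity flags.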

We found that, in terms of QoI and SM, the VRTogether system and the face-to-face condition were rated significantly higher than Facebook Spaces. For PI, no statistically significant differences were found between the two Social VR systems.

In the interviews, 47% of the participants pointed out that the avatar representation in Facebook Spaces has low realism; they preferred the 2D video representation offered by the VRTogether system. Some participants complained that the eyes were missing (37.5%) or that part of the self-representation was missing (28.1%) in the VRTogether system, while others had no problem with the lack of eye contact (40.6%). About a fifth (21%) of the participants found the controllers in Facebook Spaces annoying. Half of the participants (50%) expressed a preference for the VRTogether system for watching movies together. Moreover, participants were generally satisfied with the virtual environment: 37.5% of them had a sense of “being there” in the virtual environments. Suggestions for future improvement focused on the ergonomics of the HMD (28.1%), better user representation (21%) and a wider field of view (12.5%).

These two studies are our first step towards the development of an experimental protocol for a new medium: social VR. We will keep you updated!